AI News Digest Bot

From 50+ tabs to one digest — automatically, twice a day

12h

fully automatic cycle, no manual input

2 formats

Telegram digest + email longread

0

duplicate articles — Airtable remembers everything

The problem

Following a topic means opening 10-15 sources every day, scanning headlines, deciding what's worth reading, then synthesizing into something useful. That's 1-2 hours of work for 10 minutes of actual insight. Most people either stop following or drown in tabs.

How it works

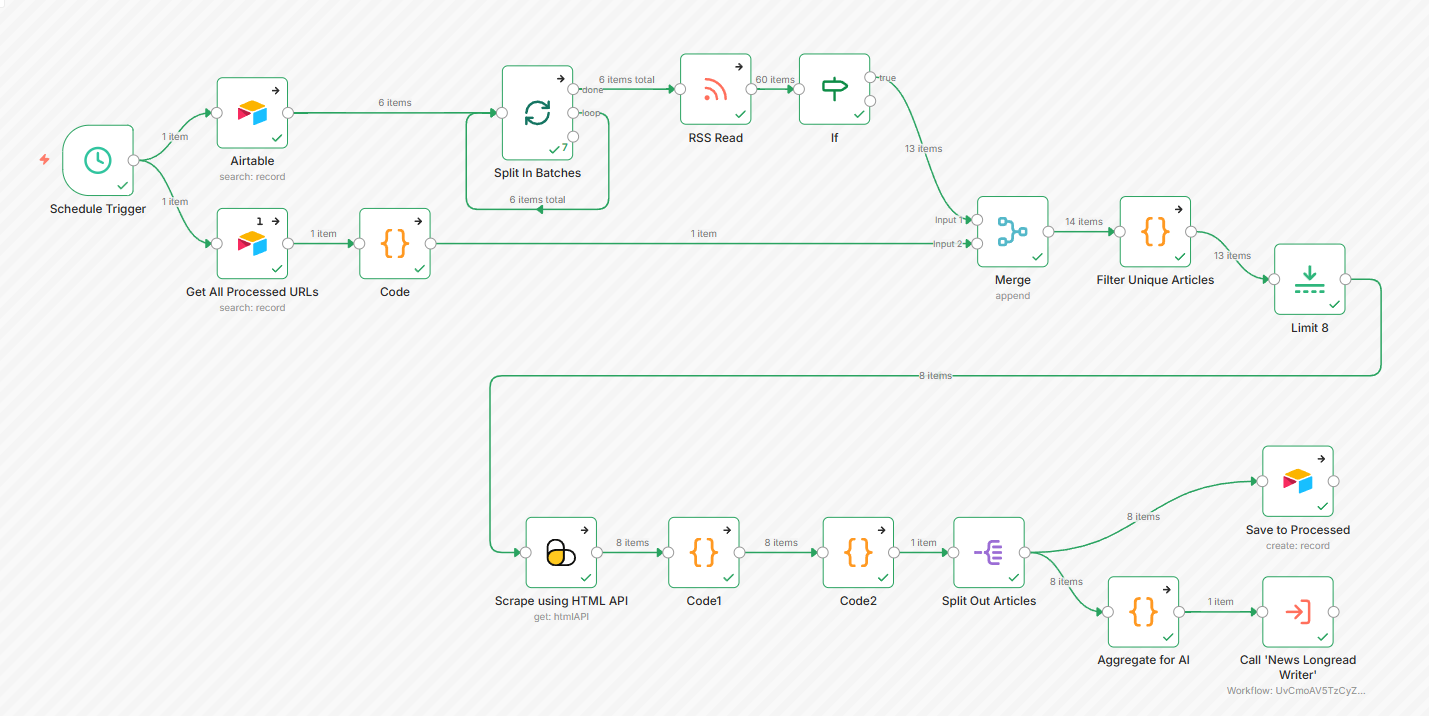

Schedule fires every 12h

Parser reads RSS sources from Airtable and fetches each feed
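To make the fetch step concrete, here is a minimal sketch of turning one feed's XML into article records. It is an illustration in plain Python, not the n8n workflow itself. It assumes RSS 2.0 feeds (Atom is not handled), and the function name and the `title` / `url` / `published` keys are hypothetical.

```python
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime


def parse_rss_items(xml_text: str) -> list[dict]:
    """Extract title, link, and publication time from RSS 2.0 <item> elements."""
    root = ET.fromstring(xml_text)
    items = []
    for item in root.iter("item"):
        link = (item.findtext("link") or "").strip()
        if not link:
            continue  # an item without a link cannot be scraped or deduplicated
        pub_date = item.findtext("pubDate")
        items.append({
            "title": (item.findtext("title") or "").strip(),
            "url": link,
            # RSS 2.0 dates follow RFC 822, which email.utils can parse
            "published": parsedate_to_datetime(pub_date) if pub_date else None,
        })
    return items


# Hypothetical feed content used only for this example
SAMPLE_FEED = """<?xml version="1.0"?>
<rss version="2.0"><channel>
  <title>Example feed</title>
  <item>
    <title>First story</title>
    <link>https://example.com/first</link>
    <pubDate>Mon, 06 Jan 2025 09:00:00 GMT</pubDate>
  </item>
  <item><title>No link here</title></item>
</channel></rss>"""

for entry in parse_rss_items(SAMPLE_FEED):
    print(entry["published"].isoformat(), entry["title"], entry["url"])
```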

Articles filtered

Only articles from the last 24h pass. Already-seen URLs are dropped by checking them against the Airtable history
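Because the 24h window is twice as long as the 12h cycle, most articles are eligible in two consecutive runs, so the history check is what keeps them from being sent twice. A minimal sketch of both filters, assuming article records with hypothetical `url` and `published` keys and URLs already in a canonical form:

```python
from datetime import datetime, timedelta, timezone


def select_new_articles(articles, seen_urls, now, max_age=timedelta(hours=24)):
    """Keep articles published within max_age whose URL is not already recorded."""
    fresh = []
    batch_seen = set()  # also catches the same URL arriving from two feeds in one run
    for article in articles:
        published = article["published"]
        if published is None or now - published > max_age:
            continue  # undated or older than the window
        url = article["url"]
        if url in seen_urls or url in batch_seen:
            continue  # already processed in an earlier run, or earlier in this one
        batch_seen.add(url)
        fresh.append(article)
    return fresh


# Hypothetical data: one fresh article, one stale, one already in the history
now = datetime(2025, 1, 6, 12, 0, tzinfo=timezone.utc)
articles = [
    {"url": "https://example.com/a", "published": now - timedelta(hours=3)},
    {"url": "https://example.com/b", "published": now - timedelta(hours=30)},
    {"url": "https://example.com/c", "published": now - timedelta(hours=5)},
]
history = {"https://example.com/c"}
print([a["url"] for a in select_new_articles(articles, history, now)])
```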

Full content scraped

ScrapingBee extracts complete article body — not RSS excerpts. Ads and banners stripped

AI analyst ranks

All articles read, grouped by topic, scored 1-10. Marketing content filtered out
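Once the analyst has scored everything, picking the stories for the writer is plain data handling. The sketch below is one possible selection rule, not the workflow's actual logic: the `min_score` threshold, the `limit`, the one-story-per-topic rule, and the field names are all assumptions made for illustration.

```python
def pick_top_stories(scored, min_score=7, limit=5):
    """Drop marketing items, keep the best article per topic, return the top `limit`."""
    best_per_topic = {}
    for item in scored:
        if item["is_marketing"] or item["score"] < min_score:
            continue
        current = best_per_topic.get(item["topic"])
        if current is None or item["score"] > current["score"]:
            best_per_topic[item["topic"]] = item
    # Highest score first; sorted() is stable, so ties keep first-seen topic order
    ranked = sorted(best_per_topic.values(), key=lambda a: a["score"], reverse=True)
    return ranked[:limit]


# Hypothetical analyst output
scored = [
    {"url": "https://example.com/a", "topic": "models", "score": 9, "is_marketing": False},
    {"url": "https://example.com/b", "topic": "models", "score": 7, "is_marketing": False},
    {"url": "https://example.com/c", "topic": "funding", "score": 8, "is_marketing": False},
    {"url": "https://example.com/d", "topic": "tools", "score": 10, "is_marketing": True},
    {"url": "https://example.com/e", "topic": "policy", "score": 4, "is_marketing": False},
]
for story in pick_top_stories(scored):
    print(story["score"], story["topic"], story["url"])
```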

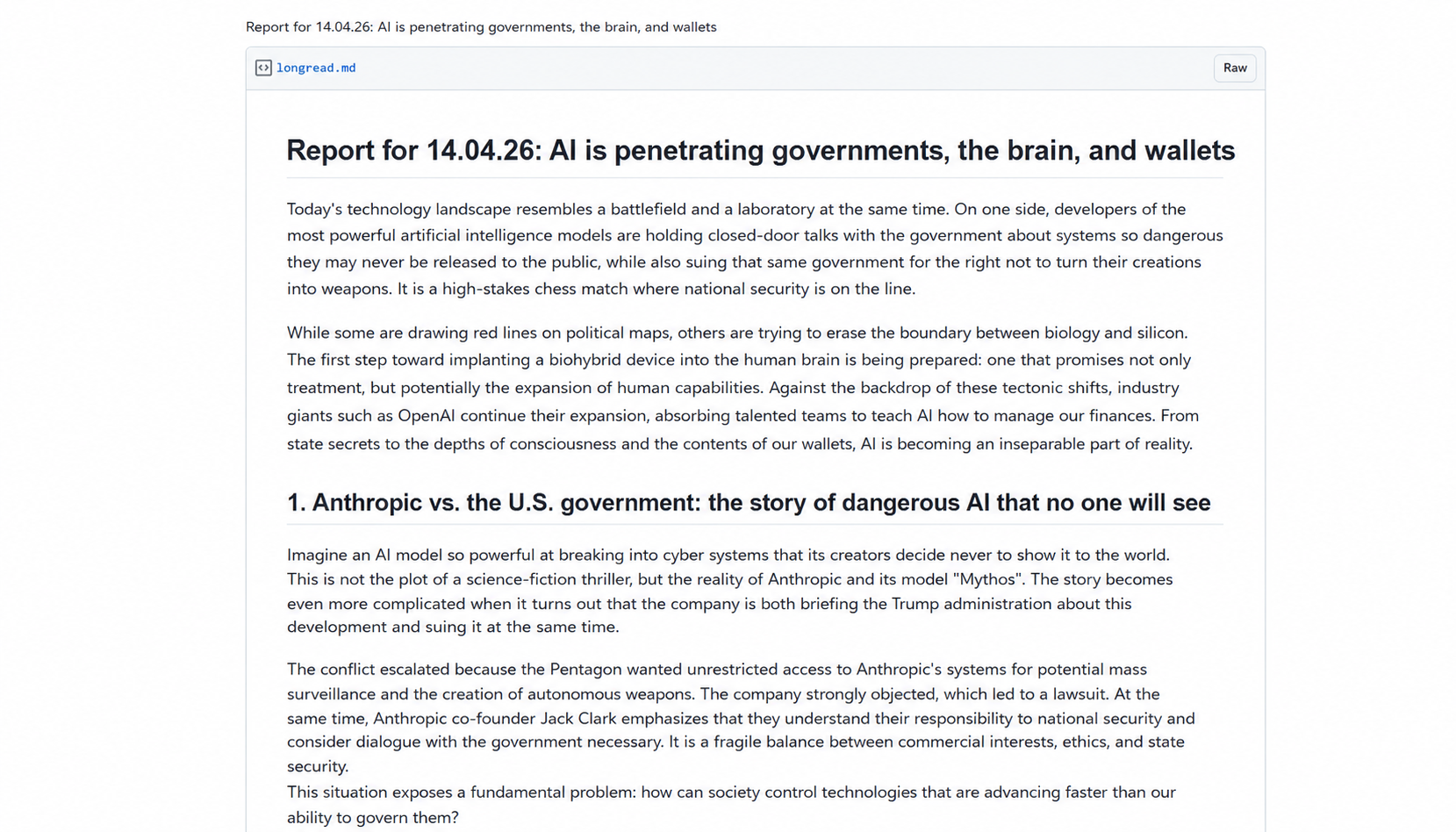

AI writer publishes

Top picks become a structured longread: intro, numbered sections, key takeaways, sources

Delivered to Telegram + email

Short digest goes to Telegram. The full longread is published as a GitHub Gist and sent by email, readable without a login
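The Telegram side has one hard constraint worth showing: the Bot API caps the text of a single message at 4096 characters. A minimal sketch of building a digest that stays under that cap, assuming hypothetical `title` and `key_point` fields and a plain-text layout invented for this example:

```python
TELEGRAM_MAX_CHARS = 4096  # Bot API limit for the text of one message


def build_digest(stories, header="AI news digest", max_chars=TELEGRAM_MAX_CHARS):
    """Build a plain-text digest, dropping trailing stories that would not fit."""
    lines = [header, ""]
    used = len(header) + 1  # header plus the newline before the blank line
    for number, story in enumerate(stories, start=1):
        entry = f"{number}. {story['title']}\n   {story['key_point']}"
        if used + len(entry) + 1 > max_chars:
            break  # stories arrive ranked, so the least important ones are cut
        lines.append(entry)
        used += len(entry) + 1  # +1 for the newline that joins entries
    return "\n".join(lines)


# Hypothetical top stories
stories = [
    {"title": "Model X released", "key_point": "Context window doubled."},
    {"title": "New EU guidance", "key_point": "Draft rules published for comment."},
]
print(build_digest(stories))
```

Cutting from the end only makes sense if the list is already ordered by importance, which is what the ranking step provides.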

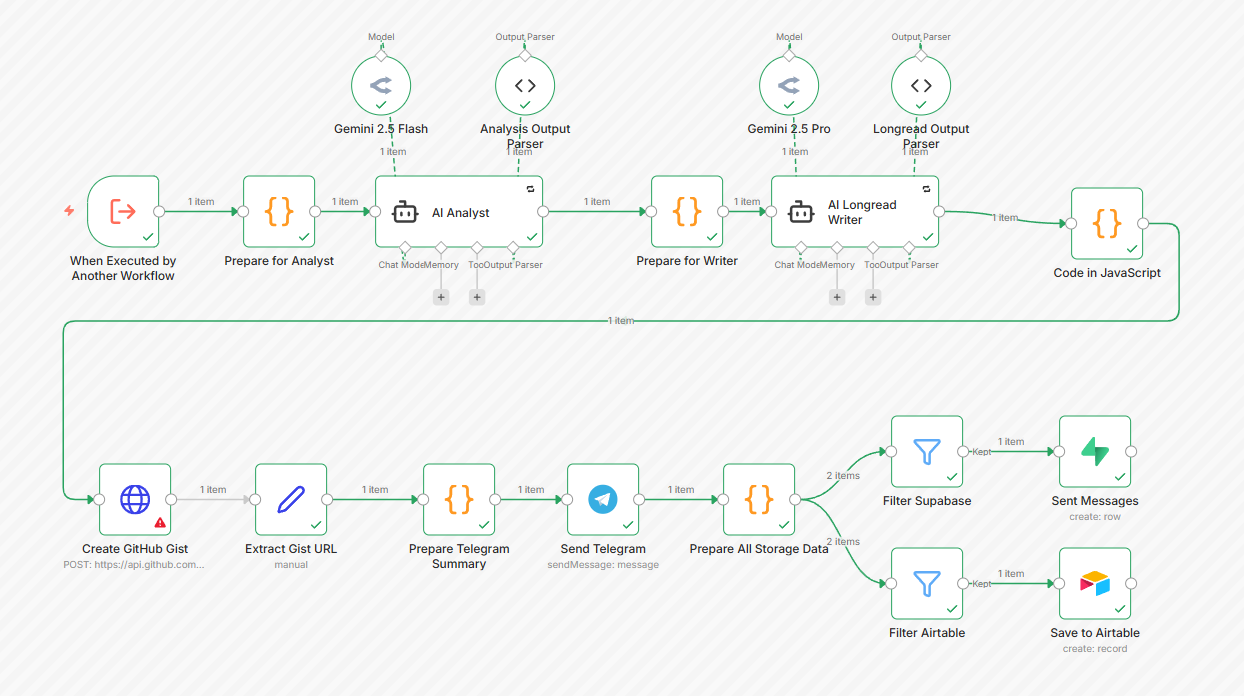

Two AI agents, two jobs

AI Analyst

Reads all articles. Groups by topic. Ranks each 1-10 by importance. Filters marketing content. Returns structured JSON: which articles matter and why.

AI Writer

Takes the ranked selection. Writes a professional longread in Markdown — intro, numbered sections per story, key points, sources, further reading.
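To show what that layout looks like on the page, here is a small sketch that assembles the same parts into Markdown. In the bot the writer model produces the text; this function only illustrates the structure, and its name, parameters, and field names are hypothetical.

```python
def render_longread(title, intro, stories, further_reading):
    """Assemble a Markdown longread: intro, numbered sections, key points, sources."""
    parts = [f"# {title}", "", intro, ""]
    for number, story in enumerate(stories, start=1):
        parts += [f"## {number}. {story['headline']}", "", story["body"], ""]
        parts += ["**Key points**", ""]
        parts += [f"- {point}" for point in story["key_points"]]
        parts += ["", f"Source: <{story['source_url']}>", ""]
    if further_reading:
        parts += ["## Further reading", ""]
        parts += [f"- <{url}>" for url in further_reading]
    return "\n".join(parts).rstrip() + "\n"


# Hypothetical content
markdown = render_longread(
    title="AI digest",
    intro="Two stories stood out in this cycle.",
    stories=[{
        "headline": "Model X released",
        "body": "The vendor shipped a new model.",
        "key_points": ["Context window doubled", "Pricing unchanged"],
        "source_url": "https://example.com/model-x",
    }],
    further_reading=["https://example.com/background"],
)
print(markdown)
```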

Why two agents

One prompt trying to both analyze and write produces mediocre output. Splitting roles forces the analyst to think before the writer writes.

Structured output

Both agents return JSON via schema parsers. The structure is validated before anything is passed forward, so unexpected formatting doesn't break the pipeline.
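The idea of validating before handing off can be sketched with a hand-rolled check in plain Python. This stands in for the schema parser and is not the one the workflow uses. The `articles` / `url` / `topic` / `score` / `reason` shape is an assumed example of what the analyst might return.

```python
import json


class InvalidAgentOutput(ValueError):
    """Raised when an agent reply does not match the expected structure."""


def parse_analyst_reply(raw: str) -> list[dict]:
    """Parse and validate the analyst's JSON before it is handed to the writer."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InvalidAgentOutput(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict) or not isinstance(data.get("articles"), list):
        raise InvalidAgentOutput("expected an object with an 'articles' list")
    for index, article in enumerate(data["articles"]):
        if not isinstance(article, dict):
            raise InvalidAgentOutput(f"articles[{index}] must be an object")
        for field in ("url", "topic", "reason"):
            if not isinstance(article.get(field), str):
                raise InvalidAgentOutput(f"articles[{index}].{field} must be a string")
        score = article.get("score")
        # bool is a subclass of int in Python, so exclude it explicitly
        if isinstance(score, bool) or not isinstance(score, int) or not 1 <= score <= 10:
            raise InvalidAgentOutput(f"articles[{index}].score must be an integer from 1 to 10")
    return data["articles"]


reply = '{"articles": [{"url": "https://example.com/a", "topic": "models", "score": 9, "reason": "major release"}]}'
print(parse_analyst_reply(reply))
```

Failing loudly at this boundary means a malformed reply stops the run at the analyst step instead of producing a broken longread later.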

The payoff

1-2 hours → 0 minutes daily

Source-checking and synthesis happen automatically, twice a day. You receive the output; there is nothing to monitor.

Right format for each context

Telegram for a quick scan during the day. Email longread when you have time to go deep.

Zero duplicates, ever

URL normalization strips UTM params and trailing slashes. Same article from different tracking links still registers as seen.
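A minimal sketch of that normalization using Python's standard library, covering just the two rules named above. The function name is hypothetical, and the workflow's own implementation may differ in details such as how other query parameters are treated.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Return a canonical form of url for duplicate checks."""
    parts = urlsplit(url.strip())
    # Drop utm_* tracking parameters, keep all others in their original order
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith("utm_")
    ]
    path = parts.path.rstrip("/")  # "/post/" and "/post" become the same key
    return urlunsplit((parts.scheme, parts.netloc, path, urlencode(query), parts.fragment))


a = normalize_url("https://example.com/post/?utm_source=telegram&utm_medium=social")
b = normalize_url("https://example.com/post")
print(a == b)
```

The normalized string is what gets stored and compared, so two tracking variants of one link collapse to a single history entry.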

Any niche, same pipeline

Replace RSS sources in Airtable — everything else works unchanged. Tech, finance, law, medicine.

What's under the hood

n8n — orchestration

Two workflows: the parser calls the writer via Execute Workflow. Each can also be run and reused on its own.

ScrapingBee — content extraction

AI extraction rules target article body only. Blocks ads, cookie banners, and nav before they reach the AI.

Claude + Gemini — analysis and writing

Analyst uses a fast model for structured analysis. Writer uses a capable model for long-form content.

Airtable — memory and sources

Two tables: RSS sources and processed URLs. Both editable without touching the workflow.
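For a sense of how the sources table is read, here is a sketch that pulls feed URLs out of an Airtable list-records response, which wraps each row as `{"id", "createdTime", "fields"}` under a top-level `records` key. The field names `Feed URL` and `Active` are assumptions for illustration. The real table's columns are not described here.

```python
def active_feed_urls(list_records_response: dict) -> list[str]:
    """Collect feed URLs from an Airtable list-records response body."""
    urls = []
    for record in list_records_response.get("records", []):
        fields = record.get("fields", {})
        # Airtable omits empty cells and unchecked checkboxes from "fields"
        if fields.get("Active") and fields.get("Feed URL"):
            urls.append(fields["Feed URL"])
    return urls


# Hypothetical response body with one enabled and one paused source
response = {
    "records": [
        {
            "id": "rec1",
            "createdTime": "2025-01-06T09:00:00.000Z",
            "fields": {"Name": "Example blog", "Feed URL": "https://example.com/feed.xml", "Active": True},
        },
        {
            "id": "rec2",
            "createdTime": "2025-01-06T09:05:00.000Z",
            "fields": {"Name": "Paused feed", "Feed URL": "https://example.org/rss"},
        },
    ]
}
print(active_feed_urls(response))
```

With a checkbox column like this, pausing a source would be a one-click edit in Airtable rather than a workflow change.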

Open source. Adapts to any niche.

Both workflow JSONs are open. Replace the RSS sources in Airtable — the rest works without changes. Tech news, finance, law, medicine — same pipeline, different feeds.

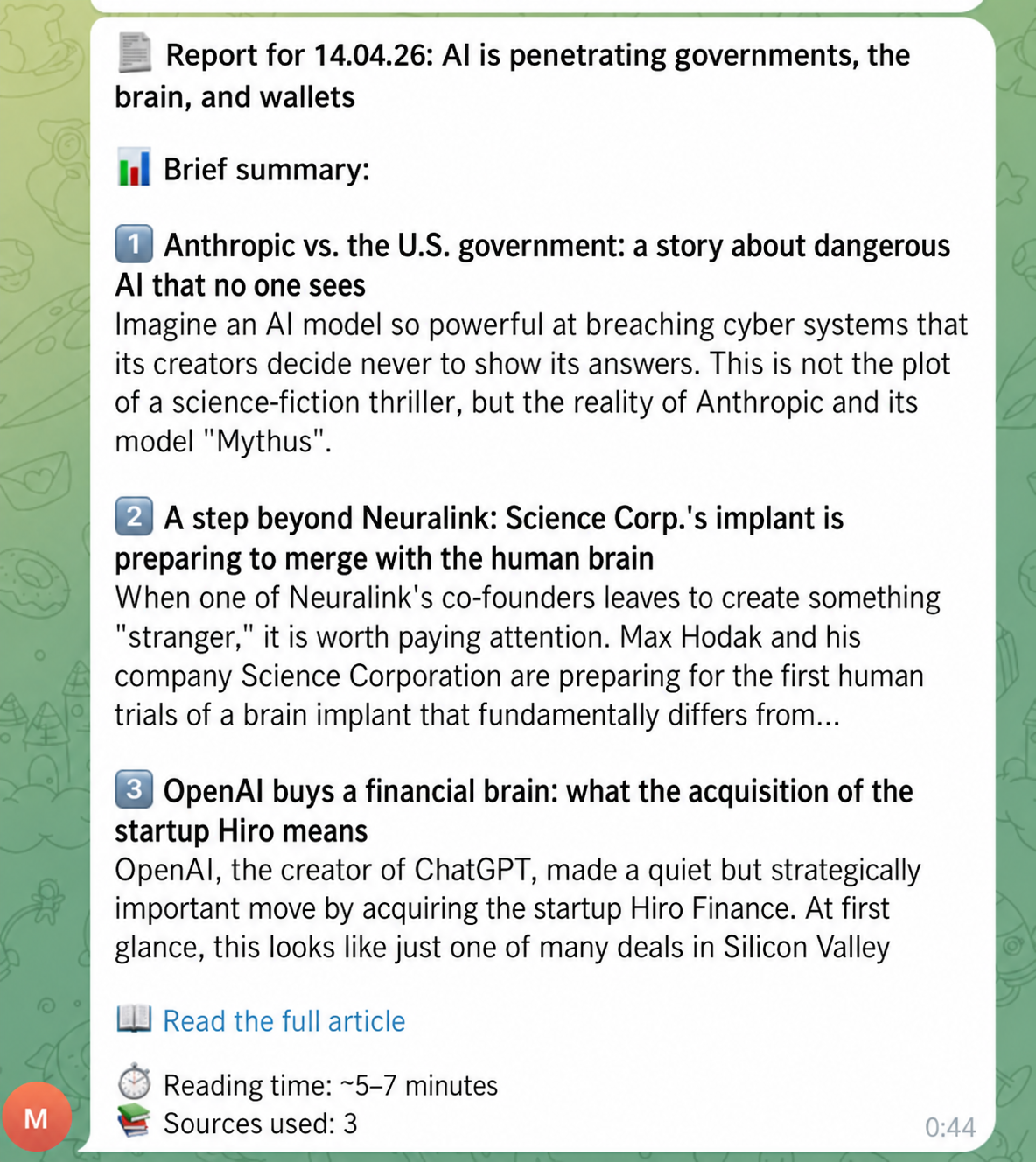

RSS monitoring

Reads multiple sources every 12 hours. Only fresh articles from the last 24h pass through.

Two-stage AI

First, the AI analyst ranks articles by importance. Then the AI writer turns the top stories into a structured longread.

Dual delivery

Telegram gets a brief digest with key points. Email gets the full longread with analysis and sources.

Memory via Airtable

Every processed URL is saved. Next run skips everything already seen — no repeats ever.